Propertybook.co.zw is Zimbabwe's largest property portal — consistently ranking 2nd–6th on Google for 4,000+ real estate keywords. They had domain authority and a private database of 7,000+ active listings. What they lacked: any scalable system for turning those assets into quality content.

Their blog was unfocused. Their existing content production process (one freelancer taking two weeks and about $200 to produce each article) couldn't scale to match the opportunity. And they were clear about what they didn't want: generic AI text that would actively hurt their rankings under Google's EEAT (Experience, Expertise, Authoritativeness, Trustworthiness) guidelines.

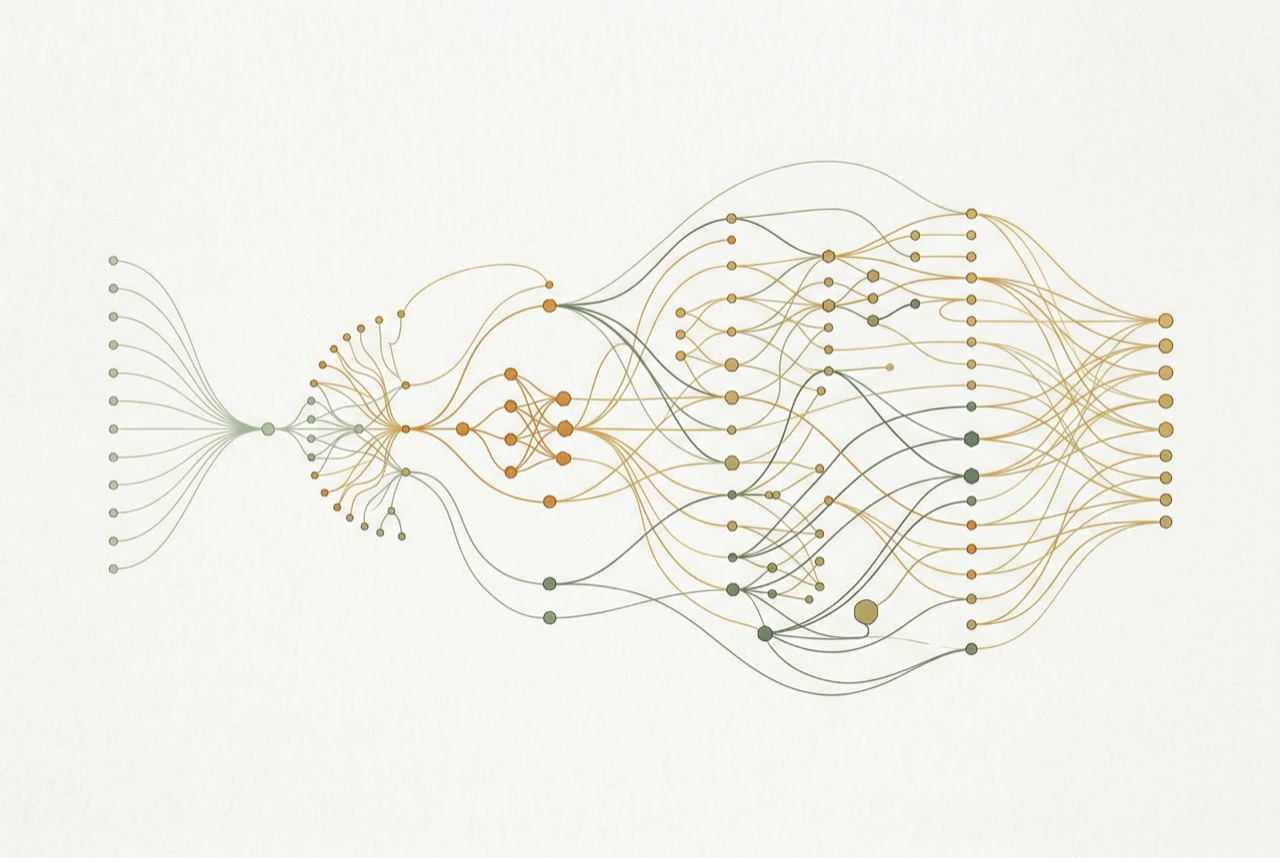

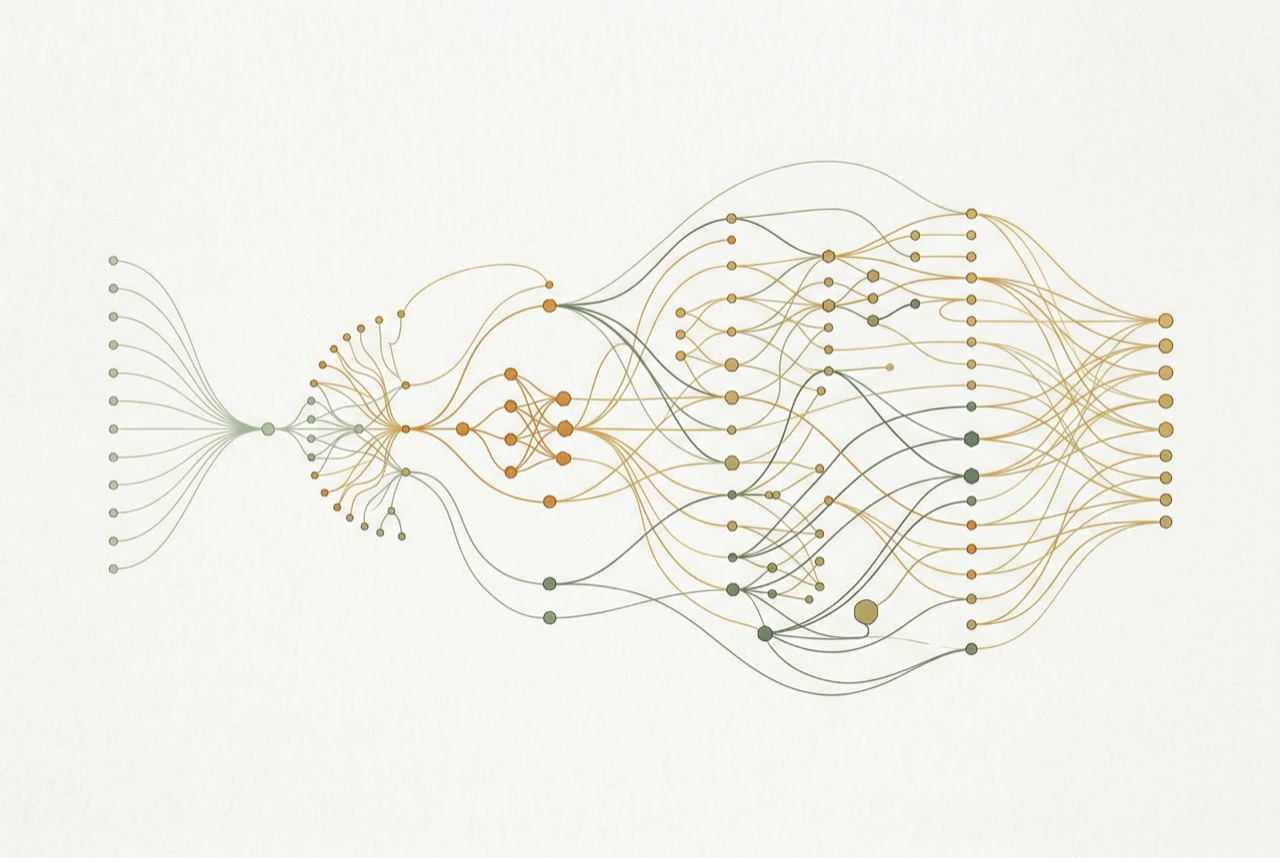

I designed and built a 6-step AI content pipeline on Coda.io, integrating eight external APIs and the client's proprietary property database. The system runs fully autonomously — select a keyword, click start, get a QA-checked article.

Step 1: Google Search Console (GSC) and Ahrefs APIs identify the highest-ROI keyword among 4,000+ tracked terms, scoring each on search volume, competition, current ranking, and estimated CTR.
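The source does not give the scoring formula, so the following is only a sketch of one way to combine those four signals. It assumes a hypothetical `Keyword` record (volume and difficulty as Ahrefs might report them, current position as GSC might report it), a placeholder CTR-by-position curve whose values are illustrative rather than measured, and a linear difficulty discount. None of these come from the actual pipeline.

```python
from dataclasses import dataclass

# Illustrative CTR-by-position curve (placeholder values, not measured data).
CTR_BY_POSITION = {1: 0.30, 2: 0.15, 3: 0.10, 4: 0.07, 5: 0.05, 6: 0.04}


def estimated_ctr(position: int) -> float:
    # Positions outside the table get a small flat CTR (assumption).
    return CTR_BY_POSITION.get(position, 0.02)


@dataclass
class Keyword:
    term: str
    volume: int        # monthly searches (hypothetical, e.g. from Ahrefs)
    difficulty: float  # 0-100 competition score (hypothetical, e.g. from Ahrefs)
    position: int      # current Google rank (hypothetical, e.g. from GSC)


def roi_score(kw: Keyword, target_position: int = 1) -> float:
    """Estimated extra monthly clicks from reaching target_position, discounted by difficulty."""
    uplift = estimated_ctr(target_position) - estimated_ctr(kw.position)
    if uplift <= 0:
        return 0.0  # already at or above the target: nothing to gain
    return kw.volume * uplift * (1 - kw.difficulty / 100)


def pick_best(keywords: list[Keyword]) -> Keyword:
    # Highest estimated click uplift wins.
    return max(keywords, key=roi_score)


# Hypothetical candidates; the numbers are invented for the example.
candidates = [
    Keyword("houses for sale in borrowdale", volume=1000, difficulty=40, position=4),
    Keyword("flats to rent in avondale", volume=500, difficulty=20, position=2),
]
best = pick_best(candidates)
```

Scoring the click uplift from moving up the results page, rather than raw volume, favors keywords where the site already ranks on page one but below the top spot.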

Step 2: SerpAPI pulls the top 5 ranking articles for the keyword. Scrapestack fetches and parses their full content, and Claude identifies content gaps, structural patterns, and missing angles.
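In the pipeline, Claude performs the gap analysis. As a simpler, deterministic stand-in for the "structure patterns" part only, this sketch counts which `<h2>`/`<h3>` headings recur across the scraped competitor pages and reports the ones missing from a draft outline. It uses only Python's standard `html.parser`; the matching rule (exact match after lowercasing and whitespace normalization) and the `min_pages` threshold are assumptions.

```python
from collections import Counter
from html.parser import HTMLParser


class HeadingParser(HTMLParser):
    """Collects the normalized text of <h2> and <h3> headings from one HTML page."""

    def __init__(self):
        super().__init__()
        self.headings: list[str] = []
        self._in_heading = False
        self._buf: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self._in_heading = True
            self._buf = []

    def handle_endtag(self, tag):
        if tag in ("h2", "h3") and self._in_heading:
            # Collapse whitespace and lowercase so near-identical headings match.
            text = " ".join("".join(self._buf).split()).lower()
            if text:
                self.headings.append(text)
            self._in_heading = False

    def handle_data(self, data):
        if self._in_heading:
            self._buf.append(data)


def common_headings(pages_html: list[str], min_pages: int = 2) -> set[str]:
    """Headings that appear on at least min_pages of the competitor pages."""
    counts: Counter[str] = Counter()
    for html in pages_html:
        parser = HeadingParser()
        parser.feed(html)
        counts.update(set(parser.headings))  # count each heading once per page
    return {h for h, n in counts.items() if n >= min_pages}


def structural_gaps(pages_html: list[str], our_headings: list[str], min_pages: int = 2) -> list[str]:
    """Recurring competitor headings that our outline does not cover."""
    ours = {" ".join(h.split()).lower() for h in our_headings}
    return sorted(common_headings(pages_html, min_pages) - ours)
```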

Step 3: Claude generates a SQL-like query that runs against the 7,000+ listing database through a custom Coda API, pulling suburb-level metrics, price trends, and property characteristics that no competitor can access.
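The source does not show the query Claude generates or the database schema, so this sketch uses an in-memory SQLite table with a hypothetical `listings` schema and made-up sample rows to illustrate the kind of suburb-level aggregate involved (listing count, average price, average bedrooms). It uses a fixed, parameterized query; whether the real pipeline templates or validates Claude's generated SQL is not stated.

```python
import sqlite3

# Hypothetical schema and sample rows standing in for the real listing database.
conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE listings (
           suburb TEXT, listing_type TEXT, price_usd REAL,
           bedrooms INTEGER, listed_on TEXT)"""
)
conn.executemany(
    "INSERT INTO listings VALUES (?, ?, ?, ?, ?)",
    [
        ("Borrowdale", "sale", 250000, 4, "2024-01-10"),
        ("Borrowdale", "sale", 150000, 3, "2024-02-05"),
        ("Avondale", "sale", 120000, 3, "2024-01-20"),
    ],
)

SUBURB_METRICS_SQL = """
SELECT suburb,
       COUNT(*)       AS active_listings,
       AVG(price_usd) AS avg_price,
       AVG(bedrooms)  AS avg_bedrooms
FROM listings
WHERE listing_type = ? AND suburb = ?
GROUP BY suburb
"""


def suburb_metrics(conn: sqlite3.Connection, suburb: str, listing_type: str = "sale"):
    """Return aggregate metrics for one suburb, or None if it has no listings."""
    row = conn.execute(SUBURB_METRICS_SQL, (listing_type, suburb)).fetchone()
    if row is None:
        return None
    keys = ("suburb", "active_listings", "avg_price", "avg_bedrooms")
    return dict(zip(keys, row))


metrics = suburb_metrics(conn, "Borrowdale")
```

Binding the suburb and listing type as parameters, instead of splicing them into the SQL string, keeps model- or user-supplied values from altering the query itself.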

Step 4: The Google Maps API provides location-specific context, and dynamic writing guidelines select the appropriate article format (neighborhood guide, investment analysis, or buyer's guide) based on search intent.
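How the pipeline maps search intent to a format is not described. A minimal rule-based sketch, assuming hypothetical cue phrases and a first-match-wins ordering, might look like this; a model-based intent classifier would be an equally plausible alternative.

```python
# Hypothetical intent cues, checked in order; the first matching format wins.
FORMAT_RULES = [
    ("investment analysis", ("invest", "rental yield", "return on")),
    ("buyer's guide", ("buy", "for sale", "mortgage", "how to")),
    ("neighborhood guide", ("living in", "best suburbs", "neighborhood", "neighbourhood")),
]
DEFAULT_FORMAT = "neighborhood guide"  # fallback when no cue matches (assumption)


def select_format(keyword: str) -> str:
    """Pick an article format from simple substring cues in the keyword."""
    kw = keyword.lower()
    for fmt, cues in FORMAT_RULES:
        if any(cue in kw for cue in cues):
            return fmt
    return DEFAULT_FORMAT
```

Because matching is first-match-wins, a keyword such as "buy to let investment" resolves to the investment format; the rule order encodes which intent takes priority.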

Step 5: Claude generates the article as structured JSON. A second Claude pass then performs automated QA, checking keyword density, EEAT signals, brand voice, and factual accuracy against the database.
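Claude's QA pass is model-based, but some of its checks can also be expressed deterministically. This sketch assumes a hypothetical JSON schema with `title`, `meta_description`, and `body` fields, defines keyword density as phrase occurrences per 100 words (one common definition among several), and uses an illustrative 0.5 to 2.5% acceptable band; none of these thresholds come from the source.

```python
import json
import re

REQUIRED_FIELDS = ("title", "meta_description", "body")  # hypothetical article schema
WORD = re.compile(r"[a-z0-9']+")


def keyword_density(text: str, keyword: str) -> float:
    """Occurrences of the keyword phrase per 100 words of text."""
    words = WORD.findall(text.lower())
    phrase = WORD.findall(keyword.lower())
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase)
    return 100.0 * hits / len(words)


def qa_check(article_json: str, keyword: str,
             min_density: float = 0.5, max_density: float = 2.5) -> list[str]:
    """Return a list of QA problems; an empty list means the article passed."""
    try:
        article = json.loads(article_json)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc}"]
    if not isinstance(article, dict):
        return ["article JSON must be an object"]
    problems = []
    missing = [f for f in REQUIRED_FIELDS if not article.get(f)]
    if missing:
        problems.append(f"missing fields: {missing}")
    density = keyword_density(str(article.get("body", "")), keyword)
    if not min_density <= density <= max_density:
        problems.append(f"keyword density {density:.2f}% outside {min_density}-{max_density}%")
    return problems
```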

Step 6: Each article's status is tracked from idea through publication. A GSC dashboard monitors each article's clicks, rank, and CTR, and feeds the results back into keyword prioritization.
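The feedback loop can be sketched as aggregating Search Console performance data per article. The rows below are shaped like entries in a Search Analytics API response (`keys`, `clicks`, `impressions`, `position`) queried with the `page` and `query` dimensions; the sample values, the impression-weighted position, and the "positions 4 to 10" re-prioritization rule are hypothetical.

```python
from collections import defaultdict


def summarize_by_page(rows):
    """Aggregate GSC-style rows (keys=[page, query]) into per-page clicks, CTR and weighted position."""
    agg = defaultdict(lambda: {"clicks": 0, "impressions": 0, "pos_x_impr": 0.0})
    for row in rows:
        page = row["keys"][0]
        a = agg[page]
        a["clicks"] += row["clicks"]
        a["impressions"] += row["impressions"]
        # Weight each row's average position by its impressions.
        a["pos_x_impr"] += row["position"] * row["impressions"]
    summary = {}
    for page, a in agg.items():
        impr = a["impressions"]
        summary[page] = {
            "clicks": a["clicks"],
            "ctr": a["clicks"] / impr if impr else 0.0,
            "position": a["pos_x_impr"] / impr if impr else None,
        }
    return summary


def striking_distance(summary, lo: float = 4.0, hi: float = 10.0) -> list[str]:
    """Hypothetical rule: pages averaging positions lo..hi get re-prioritized."""
    return sorted(
        p for p, s in summary.items()
        if s["position"] is not None and lo <= s["position"] <= hi
    )


# Hypothetical rows in the shape of a Search Analytics response.
rows = [
    {"keys": ["/a", "q1"], "clicks": 10, "impressions": 100, "position": 3.0},
    {"keys": ["/a", "q2"], "clicks": 0, "impressions": 100, "position": 7.0},
    {"keys": ["/b", "q3"], "clicks": 50, "impressions": 200, "position": 1.5},
]
summary = summarize_by_page(rows)
```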

I built the entire pipeline as a Coda.io prototype before committing to production infrastructure. That meant the client could test the system with real data in days — not months. Every edge case, every API quirk, every prompt failure surfaced cheaply in the prototype stage. By the time we went live, the system was battle-tested.

In this case, the Coda prototype became the production system. It now runs continuously, processing keywords and producing articles on demand.

The system ran over a weekend. Monday morning: 450 fully written, EEAT-optimized, QA-checked articles in the CMS. Each article was unique, each incorporated proprietary database data, and each was structured for the specific search intent of its target keyword.

Six months after launch, published articles had improved by an average of two Google ranking positions. Articles drawing on private database data consistently outperformed generic AI content from competitors.

"Genuinely amazing. Jheesh, well done. And thank you very much. Super chuffed with this."

— Client, Propertybook.co.zw